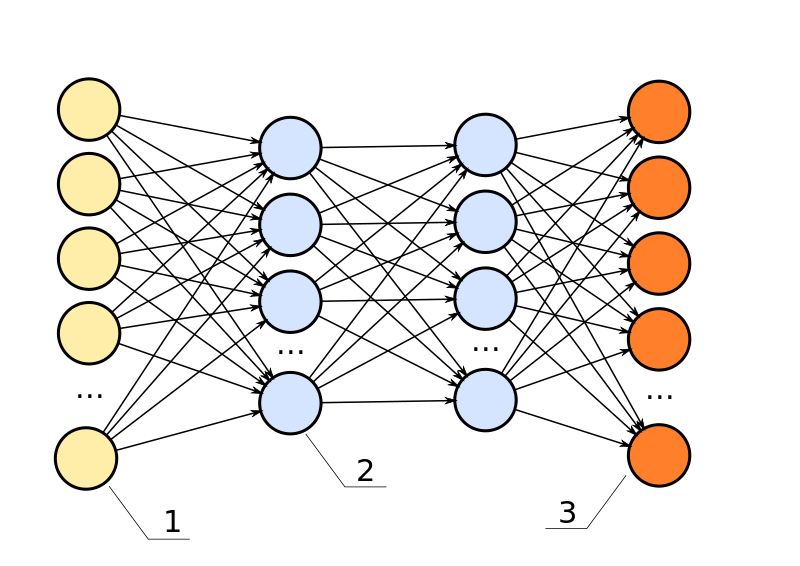

Deep learning algorithm is an artificial neural network (ANN) which has many hidden layers. Neural network is inspired by how our brain works. Each of its node acts as a neuron - it takes input from previous layer and feeds output to nodes in the next layer of neural network.

Deep learning is a powerful learning algorithm used for both supervised and unsupervised learning. In recent years, deep learning has seen substantial success because abundance of data, cheap computation and advancement in optimization algorithms.

Following are 3 important aspects of deep learning algorithm one must work on carefully to get most out of it;

1. Weight Initialization:

One of the problems in training deep neural network is vanishing or exploding gradients caused by weight initialization. If the weights are too big, it will exponentially increase activation values (as functions of number of layers) of a deep network. Conversely, very small weights will exponentially decrease activation values. In similar fashion, the gradients of deep network will increase or decrease exponentially making training difficult.

This problem can be partially fixed through careful random weight initialization, like Xavier initialization etc. These methods try to keep weights neither too large nor too small (by lowering the variance of weights).

2. Overfitting

Neural network has overfitting or high variance problem if test error is too high and training error is low. There are two potential ways to deal with overfitting problem: a) get more training data and b) regularization. Since more data is often not readily available, you might have to try out regularization to fix overfitting problem.

Regularization is a method to reduce impact of hidden units by penalizing weights. Regularization is achieved through optimization of hyper-parameter lambda. Inverted dropout is another method to address overfitting problem. In dropout, we randomly take of some of the hidden units during forward and backward propagation. This dictates learning algorithm not to depend on any of hidden units too much as they may disappear.

3. Backpropagation:

Backpropagation is a method to compute gradients of weights during network training. Implementation of backpropagation is the hardest part of deep learning algorithm. It is very likely to introduce errors in calculation of gradients. Gradient checking is a way to avoid bugs in implementation of backpropagation and make sure you are calculating gradients correctly. In gradient checking, we basically numerical approximate the gradients and check how much they are off from the ones calculated through backpropagation. If the difference is within acceptable threshold, we are good otherwise there is a need to debug the code.

Author: Nasir Mahmood, PhD