Computer vision has seen great success during last few years, mainly due to advances in deep learning. Applications like unlock of phone and house doors using face recognition, and classification of pictures in smartphone's gallery app are all direct consequences of application of deep learning in computer vision.

To be specific, application of deep learning has been very successful on computer vision problems such as;

- image recognition

- image classification with localization

- object detection

- neural style transfer

High dimensional feature vectors (due to large sized images) is one of the challenges when we apply deep learning to computer visions. It is impossible to train regular deep neural network on even an average sized images. To address this challenge, machine learning community has come up with an exceptionally successful technique, convolutional neural networks (CNN).

Convolution Operation

Convolution operation is a fundamental building of convolutional neural networks. It allows network to detect horizontal and vertical edges of an image and then based on those edges build high-level features (like ears, eyes etc.) in following layers of neural network.

Convolution operation involves an input matrix and a filter, also known as kernel. Input matrix can be pixel values of a grayscale image whereas a filter is relatively small matrix which detects edges by darkening areas of input image where there are transitions from brighter to darker areas. There can be different types of filters depending upon what type of features we want to detect, e.g. vertical, horizontal or diagonal etc.

In convolution operation, we start by superimposing a filter onto the input matrix at top-left corner and then do element-wise multiplication and add up all the values. The output value is written to top-left cell of the output matrix. Next we shift our filter by one element to the left and repeat same multiplication and addition to get next output value. During shift operation when we hit the right boundary of input matrix, we shift one element down and move all the way back to the right boundary of input matrix. We continue this until we reach to the bottom-right of input matrix.

Do we need to write code for convolution operation by ourselves?

Fortunately, the answer is 'No'. The main machine learning frameworks such as TensorFlow, Keras etc. come with convolution operation. We simple need to give them input matrix and filter (along with other parameters which we will discuss later) and they will give us back output matrix.

Do we need to specify values of a filter?

No, because in deep learning these values (weights) can be learned by learning algorithm through backpropagation.

Padding

Padding is an extended form of convolution operation. In deep neural networks, we do convolution operation with padding to get output matrix of same size as that of input matrix. Let's say out input matrix is 6x6 and we convolve it with a filter of size 3x3. Without padding the output matrix would be 4x4 because there are only 4x4 places to superimpose filter on input matrix. That gives following formula;

$$ (n-f+1) \times (n-f+1) $$

The straight convolution operation has two downsides;

1) it shrinks image each time we convolve, and

2) the corner pixels of the input matrix are looked at only one time as it is touched once by the filter. On the other hand, the middle pixel of 6x6 input matrix sees many overlaps with 3x3 filter. That means we are throwing away information of edge pixels by underusing them in convolution.

Imagine, we have a 100-layered neural network and convolve at every layer, then eventually we would have a very small shrunk image.

We can fix this problem by padding the input image before applying convolution operation. Typically we pad one additional pixel to the border. This would give us output matrix of same size as input matrix;

$$n+2p-f+1 \times n+2p-f+1 \times$$

Valid and Same Convolution

Convolution operation without padding is called valid convolution. The one with padding is known as same convolution - the output size is same as input because of padding . Typically value of $p$ is chosen by formula

$$p = \frac{f-1}{2}$$

Typically, convolution filter size is odd because it makes padding size easier to chose.

Strided Convolution

Strided convolutions is another variant of convolution operation and basic building block of CNN. In strided convolution operation, we use stride (s) when we shift the filter over input matrix.

Straight convolution operation has stride, $s=1$, as we only shift filter by one column or row (after hitting the right boundary of input matrix). However, strided convolution has $s$ is greater than 1 as it steps over by 2 or move columns and rows.

We can calculate output values in similar fashion as in straight convolution i.e. element-wise product and then sum of all product values. The dimensions of output matrix are governed by

$$\frac{n+2p-f}{s} \times \frac{n+2p-f}{s}$$

where

$nxn$ - dimensions of input matrix,

$fxf$ - dimensions of filter,

$p$ - is padding,

$s$ - strides

If $\frac{n+2p-f}{s}$ is not an integer, we will round it down.

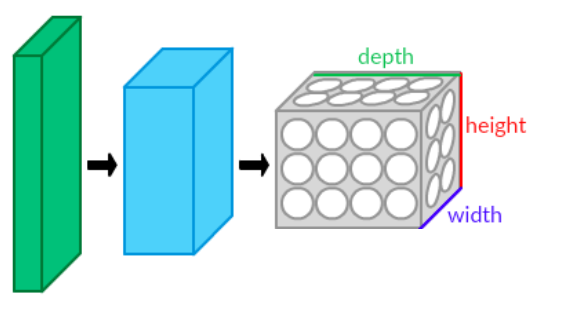

Convolution over Volume

Colour RGB images have 3 channels, red, green and blue. In order to features in such images, we use 3D filters with additional dimension for colour channels. The filter itself has 3 layers corresponding to three colour channels.

$$H \times W \times C$$

Number of channels (C) in input image and filter must match, like

$$6 \times 6 \times 3 * 3 \times 3 \times 3 = 4 \times 4 $$

To compute output, we superimpose each filter layer to corresponding input layer, take product and then add up 27 numbers to one. The shift operation is similar to 2D convolution. You can image 3-layered filter as a volume which to corresponding input volume and then shrunk to one element of output matrix (through multiplication and addition of 27 products).

Multiple Filters

So far we have discussion application of one filter for convolution operation. Let's say we want to detect N types of different edges in input image. That would require us to have N filters and convolve them to input image. If we have two 3x3x3 filters and an 6x6x3 input, the convolution operation will give us two 4x4 output layers i.e. 4x4x2 volume.

$$ n_{h} \times n_{w} \times n_{c} * f \times f \times n_{c} = n_{h}-f+1 \times n_{w}-f+1 \times n_{c} $$

The idea of convolutions over volume is very powerful. This allows us to operate on RGB channels and at the same time detect multiple types of edges.

One Layer of Convolutional Network

We have discussed the core concept of convolution operation and related techniques of padding, strides and convolution over volume. Now let's see how we can put them together in one layer of convolutional network.

Let's say we have 3D input (6x6x3) and are only interested in 2 types of features/edges. Consequently we decided to convolve input with two filters (3x3x3) i.e. convolution over volume. This would give us two 4x4 outputs. In convolutional neural network, we will add bias to each of two outputs and then put them through relu non-linearity function. The final two ouput (4x4) matrices when stacked up would give us a output volume (4x4x2).

If you remember, a typical layer of neural network has following 2 operation (linear function and non-linear relu);

$$ z^{[1]} = w^{[1]}a^{[0]} + b^{[1]}$$

$$a^{1} = g(z^{[1]})$$

In convolutional network, $a{[0]}$ is 3D input matrix and the two filters are $w{[1]}$. The ouput volume we get after applying relu non-linearity is activation $a^{[1]}$.

This is how we go from $a^{[0]}$ (6x6x3) to $a^{[1]}$ (4x4x2). First we apply convolution operation to compute linear function ($z^{[1]}$) and then add biases to the output matrices and put them through relu non-linearity to get $a^{[1]}$.

In this example, we have two filters in order to detect two features. In practice, we may have a much larger input matrix and hundreds of filters. As we add more number of filters we add, the depth of output volume will increase.

The filters and biases represent parameters of layer of convolutional network. For instance, if we have ten 3x3x3 filters the total number of parameters would be 280, where each filter contributes 28 (3x3x3 filter values + 1 bias) parameters. It is important to note that even if the size of input image increase the number of parameters will not change. This makes convolutional neural networks less prone over-fitting.

Pooling Layers and Max Pooling

Pooling layers in convolutional neural network allow us to reduces representation size and speed up the computation. It also helps us to detect some of features in more robust matter. Though there can be different types of pooling, max (maximum) pooling is the most commonly used in ConvNets. Pooling layer often has two hyperparameters;

f = size of max filter

s = size of strides

$f = 2$ and $s = 2$ are common choice of hyperparameters in pooling layer. With these parameters, pooling layer shrinks height and width of representation by a factor of 2. Hyperparameter of padding, $p$, is rarely used in pooling layers. Input to pooling layer is a volume, such as

$$ n_{h} \times n_{w} \times n_{c}$$

The convolution formula to compute output size of max pooling stays the same;

$$\frac{n_{h} + 2p -f}{s} + 1 \times \frac{n_{w} + 2p -f}{s} + 1 \times n_{c}$$

Max pooling has been used quite effectively by machine learning community. The intuition behind max pooling is to detect and preserver a particular feature from input convolution layer of neural network. This feature may exist in upper left quadrant of input. What maximum operation does is it preserves the target feature by keep a high number in output. If that feature does not exist, the corresponding max number output would be low.